The Hidden Cost of Speed: When Fast Connections Become Fragile

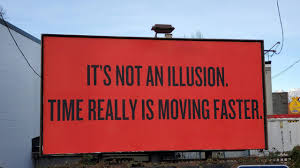

There’s a reason IT teams obsess over speed metrics. Low latency, fat pipes, instant page loads. These numbers look great in reports and they’re easy to brag about in standups.

But here’s what nobody puts on the dashboard: how quickly that fast connection falls apart when something goes wrong. And something always goes wrong.

Fast Usually Means Fragile

Most teams build their infrastructure the same way. Find the absolute quickest path, shove everything through it, move on to the next project. It works perfectly for six months, maybe a year, and then one Tuesday morning at 7 a.m. it doesn’t.

The problem is concentration. When you optimize for speed, you naturally funnel traffic into fewer and faster routes.

One blazing-fast datacenter proxy in Virginia, one provider with incredible peering agreements, one region where latency sits at a gorgeous 30 milliseconds. That’s also one thing that can break and take your whole operation down with it.

Anyone who’s built a web scraping pipeline or a price monitoring system has lived through this at least once. The question of whether reliability differs between datacenter and residential proxies comes up constantly in these conversations, because the answer actually matters when you’re choosing between a connection that’s fast today and one that’s still working next Thursday.

What Downtime Actually Costs

Gartner’s old estimate of $5,600 per minute of IT downtime still gets cited everywhere, and honestly, it’s probably low at this point. Splunk and Oxford Economics published a study in 2024 estimating that Global 2000 companies lose a combined $400 billion a year to unplanned outages.

Those are the big, dramatic failures though. The sneaky ones are worse in some ways.

A proxy pool that quietly starts returning stale data. A CDN edge node that drops 3% of requests for two hours before anyone notices. An automation script that reruns successfully but with garbage inputs.

Nobody files an incident report for those. They just show up as weird data gaps three days later, and then someone spends a full afternoon figuring out what happened.
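
Catching a quiet 3% drop rate mostly comes down to watching a rolling window of request outcomes instead of waiting for a hard outage. Here’s a minimal sketch of that idea. The class name, the window size, and the 2% threshold are all illustrative assumptions, not recommendations from any particular monitoring tool.

```python
from collections import deque


class FailureRateMonitor:
    """Tracks the failure rate over the most recent `window` requests.

    Hypothetical helper for illustration; window and threshold are assumptions.
    """

    def __init__(self, window: int = 1000, threshold: float = 0.02):
        # True = request succeeded, False = request failed.
        # The deque drops the oldest result once `window` entries are stored.
        self.results = deque(maxlen=window)
        self.threshold = threshold

    def record(self, ok: bool) -> None:
        self.results.append(ok)

    def failure_rate(self) -> float:
        if not self.results:
            return 0.0
        return self.results.count(False) / len(self.results)

    def should_alert(self) -> bool:
        # Wait until the window is full so one early failure
        # does not trip the alarm on its own.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.failure_rate() > self.threshold
```

Calling `record()` after every request and checking `should_alert()` on a timer is enough to turn a two-hour silent failure into something that gets noticed in minutes. It won’t catch stale-but-successful responses, which need a separate freshness check on the data itself.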

Why Teams Keep Making the Same Mistake

It’s a measurement problem, mostly. Teams get evaluated on throughput and response times, not on what happens when things go sideways.

Redundancy feels wasteful when everything’s running fine. Why pay for backup proxy pools or spread traffic across multiple regions when your current setup hits 25-millisecond response times? Adding a second provider that runs at 60 milliseconds looks like a downgrade on paper.
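
The “downgrade on paper” looks different once you put rough numbers on availability. The sketch below is back-of-the-envelope arithmetic, not a measurement: the 99.5% availability figure is a made-up example, and the calculation assumes the two providers fail independently, which is optimistic if they share an upstream network or region.

```python
MINUTES_PER_YEAR = 365 * 24 * 60


def combined_availability(*availabilities: float) -> float:
    """Probability that at least one provider is up.

    Assumes provider failures are independent (an optimistic simplification).
    """
    all_down = 1.0
    for a in availabilities:
        all_down *= 1.0 - a
    return 1.0 - all_down


def expected_downtime_minutes(availability: float) -> float:
    """Expected minutes per year with no working provider."""
    return (1.0 - availability) * MINUTES_PER_YEAR


# Illustrative numbers only: one provider vs. two at the same availability.
single = 0.995
paired = combined_availability(0.995, 0.995)
```

Under those assumptions, a single provider at 99.5% is unavailable for about 2,628 minutes a year (roughly 44 hours), while two such providers are both down for about 13 minutes. Real failures are correlated, so the true gain is smaller, but the order of magnitude is the point.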

Harvard Business Review covered this gap after back-to-back cloud platform outages in late 2025. A lot of companies assumed their providers had resilience handled. The providers didn’t, and the customers without backup plans paid for it.

The networking world figured this out ages ago, by the way. Designing out single points of failure goes back to the research behind ARPANET: in the early 1960s Paul Baran proposed distributed networks precisely so that no single broken node could kill the whole system, and packet switching, which Baran and Donald Davies developed independently, is what made that design practical.

We’ve had the playbook for more than 50 years. Most proxy setups still ignore it.

What Actually Works

You don’t have to sacrifice speed to get reliability. You just have to stop pretending speed is the only thing that counts.

Rotating requests across a bigger proxy pool adds maybe 15 to 20 milliseconds of latency on some requests. That’s nothing for most use cases. But it means one blocked IP or one provider hiccup doesn’t crater your entire data collection run.
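
To make the rotation idea concrete, here’s a minimal sketch of round-robin rotation with failover. Everything named here is hypothetical: `RotatingProxyPool`, `AllProxiesFailed`, and the `fetch_via(url, proxy)` callable are stand-ins for whatever HTTP client and proxy list you actually use, and the retry limit of three is an arbitrary choice.

```python
import itertools
from typing import Callable, Iterable, Optional


class AllProxiesFailed(Exception):
    """Raised when every attempted proxy fails for a request."""


class RotatingProxyPool:
    """Round-robin over a list of proxies, moving on when one fails.

    Illustrative sketch only; names and defaults are assumptions.
    """

    def __init__(self, proxies: Iterable[str], max_attempts: int = 3):
        self._proxies = list(proxies)
        if not self._proxies:
            raise ValueError("proxy pool must not be empty")
        self._cycle = itertools.cycle(self._proxies)
        # Never try more proxies than the pool actually contains.
        self._max_attempts = min(max_attempts, len(self._proxies))

    def fetch(self, url: str, fetch_via: Callable[[str, str], str]) -> str:
        """Try the next proxies in rotation until one returns a response."""
        last_error: Optional[Exception] = None
        for _ in range(self._max_attempts):
            proxy = next(self._cycle)
            try:
                return fetch_via(url, proxy)
            except Exception as exc:
                # A blocked IP or provider hiccup: remember it, try the next proxy.
                last_error = exc
        raise AllProxiesFailed(
            f"{self._max_attempts} proxies failed for {url}"
        ) from last_error
```

In practice `fetch_via` would wrap your HTTP client with a timeout, so a dead proxy fails fast instead of hanging the whole run. A production version would also want to bench proxies that fail repeatedly rather than keep cycling back to them.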

Spreading infrastructure across two or three countries helps too. Cloudflare’s documentation on latency points out that 50 to 100 extra milliseconds barely registers for most web applications. You’re trading a delay most users won’t notice for protection against regional outages.

And here’s a simple test most teams skip: pull one of your proxy pools offline on purpose during a quiet period. See what breaks. You’ll learn more in that hour than from six months of uptime dashboards.
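
If pulling a live pool feels too risky for a first attempt, the same drill can be rehearsed in code against a staging setup. The sketch below is one hypothetical way to structure it: `run_drill` and the pool names are invented for illustration, and each pool is represented by a plain callable that either returns a response or raises.

```python
from typing import Callable, Dict, List


def run_drill(
    pools: Dict[str, Callable[[str], str]],
    disabled: str,
    urls: List[str],
) -> float:
    """Return the fraction of URLs fetched successfully with one pool disabled.

    Hypothetical drill harness; `pools` maps a pool name to a fetch callable.
    """
    if disabled not in pools:
        raise KeyError(f"unknown pool: {disabled}")
    # Simulate the outage by removing the chosen pool from rotation.
    active = {name: fetch for name, fetch in pools.items() if name != disabled}
    successes = 0
    for url in urls:
        # Try each remaining pool in order until one works.
        for fetch in active.values():
            try:
                fetch(url)
                successes += 1
                break
            except Exception:
                continue
    return successes / len(urls) if urls else 0.0
```

Run it once per pool with a representative sample of URLs. A success rate that falls off a cliff when one particular pool is disabled tells you exactly where your single point of failure lives.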

The Boring Competitive Advantage

Reliability isn’t exciting. Nobody’s writing blog posts about their 99.5% success rate with the same enthusiasm they bring to a sub-20-millisecond response time.

But when a competitor’s pipeline goes down because their single proxy provider had a rough afternoon, the team with fallback infrastructure just keeps collecting data. That gap compounds fast. Cleaner datasets, fewer fire drills, and nobody getting paged at 2 a.m. on a Saturday.

Speed you can’t count on isn’t really speed. It’s a bet, and eventually the odds catch up.